Question Answering Systems For Specialist Publishers

Provide interactive specialist publications with AI

Retrieval Augmented Generation (RAG): Chatting With Your Own Data

Want to develop new offers and services? Achieve greater engagement and strengthen loyalty to your content? Whether you have specialist articles, books, databases or archives, our RAG-based solutions transform your content and data assets into interactive, dynamic sources of knowledge.

Our question-and-answer systems are ready to use immediately, can be seamlessly integrated via standard APIs and flexibly enriched. You control content precisely by topic and domain – for answers that match your area of expertise exactly.

Make your knowledge accessible in natural language: interactive AI assistants answer user questions in real time – context-sensitive, reliable and based on your internal and external content.

At the same time, you gain valuable insights directly from usage: performance KPIs, transparent usage patterns and qualitative insights from real questions and topics of interest to your target groups.

Why RAG And Question Answering Systems? Because Facts Matter!

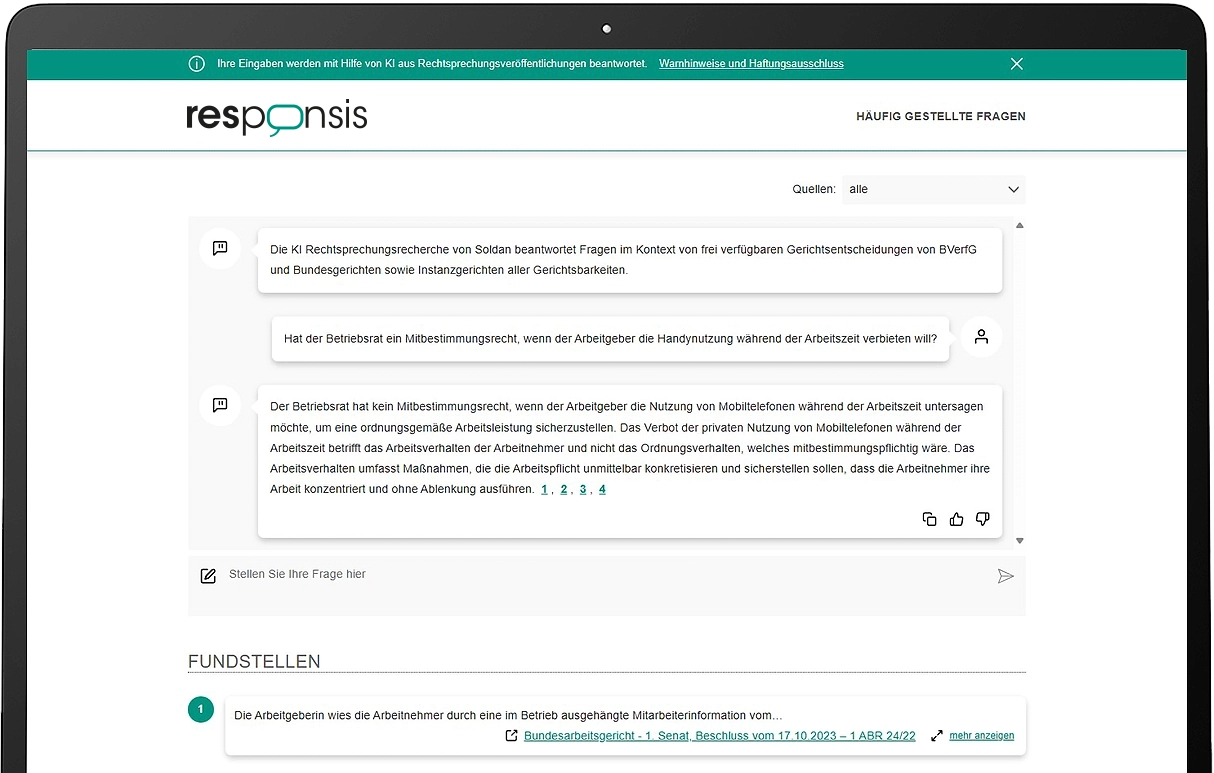

Generalist chatbots like ChatGPT are impressive, but they often distort or invent content. For specialist publishers who rely on precision and reliability, this presents a real challenge. Our RAG-based question answering solution combines the power of generative AI with the security of your own data sources: before generating a response, the system runs a targeted search of your data and content pools, then formulates reliable, context-sensitive answers in natural language based on what it found.

The key advantage: Our solution combines generative AI with semantic search, neural retrieval methods, and powerful parsing. This enables the system to identify even deeper semantic relationships in complex data sources – whether specialist articles or archival material.

How A RAG-Based Question Answering System Works In Practice – Using The Example Of A Specialist Publisher

A user is searching for up-to-date information to develop their B2B marketing strategy, focusing on paid newsletters and the use of AI agents. Previously, they would have had to sift through numerous magazine archives, documents, and keyword lists – with some luck, relevant articles for their specific question might have been found.

With a RAG-based question-and-answer system, things work differently: the user asks the system their question and receives a concrete, comprehensible and reliable answer in a matter of seconds. To do this, the system searches the internal publishing archive, existing specialist publications and special editions, filters out relevant content and formulates an individual answer that is precise and to the point. Content quality stays under the publisher's control: only the content selected for the system is searched and prepared to fit the specific requirements.

And even better: The question-answer system not only delivers precise answers but also directly links to the relevant articles, points to related editions, and can specifically offer special publications for purchase or as subscription upgrades. This creates entirely new user experiences – personal, interactive, and conversion-driven. The RAG system becomes the digital sales assistant for content offerings!
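The flow described above (search the archive, keep the relevant content, answer with links to the underlying articles) can be sketched in a few lines of Python. This is a minimal illustration, not Retresco's implementation: the `Article` type, the `retrieve` and `build_prompt` helpers, the example archive and the `example.com` URLs are all assumptions made for this sketch, and a simple TF-IDF cosine ranking stands in for the semantic and neural retrieval a production system would use. The call to a language model is left out; the sketch stops at the grounded prompt that would be sent to it.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Article:
    title: str
    url: str  # hypothetical link target, used for source references
    text: str


def tokenize(text: str) -> list[str]:
    # Lowercase and split into alphanumeric tokens.
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm = math.sqrt(sum(w * w for w in a.values())) * math.sqrt(
        sum(w * w for w in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question: str, archive: list[Article], k: int = 2) -> list[Article]:
    """Return up to k articles ranked by TF-IDF cosine similarity (a stand-in
    for semantic search); articles with no overlap are dropped."""
    counts = [Counter(tokenize(f"{a.title} {a.text}")) for a in archive]
    df = Counter(term for c in counts for term in c)  # document frequency
    idf = {t: math.log((1 + len(archive)) / (1 + n)) + 1.0 for t, n in df.items()}

    def weigh(c: Counter) -> dict[str, float]:
        # Terms unknown to the archive get weight 0.
        return {t: tf * idf.get(t, 0.0) for t, tf in c.items()}

    query = weigh(Counter(tokenize(question)))
    scored = [(cosine(query, weigh(c)), a) for c, a in zip(counts, archive)]
    scored = [pair for pair in scored if pair[0] > 0.0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [a for _, a in scored[:k]]


def build_prompt(question: str, hits: list[Article]) -> str:
    """Assemble a grounded prompt: numbered sources with links, then the question."""
    sources = "\n".join(
        f"[{i}] {a.title} ({a.url}): {a.text}" for i, a in enumerate(hits, 1)
    )
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


# Hypothetical mini-archive for illustration only.
archive = [
    Article("Paid newsletters in B2B marketing", "https://example.com/paid-newsletters",
            "How publishers price paid newsletters and grow B2B subscriptions."),
    Article("AI agents for marketing teams", "https://example.com/ai-agents",
            "AI agents automate campaign research and reporting for marketing teams."),
    Article("Print layout basics", "https://example.com/print-layout",
            "Choosing typefaces and grids for magazine pages."),
]

question = "How do paid newsletters and AI agents fit into a B2B marketing strategy?"
hits = retrieve(question, archive)
print(build_prompt(question, hits))
```

Because the retrieved articles travel with the prompt as numbered sources, the generated answer can cite them, and the same `hits` list can drive the article links and related offers shown next to the answer.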

Use Cases With RAG-Based Question Answering Systems

The use cases for RAG and our knowledge-based systems are diverse:

Question Answering Systems With RAG: Making Expert Knowledge Efficiently Accessible

With our RAG-based AI applications, you make your expert knowledge accessible on demand:

Why choose a question answering system from Retresco?

| | Retresco’s RAG-based system | ChatGPT & comparable systems |
|---|---|---|
| Architecture | Agentic AI: Multi-level orchestration of retrieval, reasoning, and generation for topic-specific information hubs, including internal and external databases and APIs as sources for answering user questions. | Conventional LLM prompting or simple RAG: retrieval and generation mostly linear without agentic control logic |
| Retrieval processes | Context-sensitive selection of sources, document types and response formats depending on user intent | Predefined search or embedding strategies with limited context differentiation |
| Data integration | Seamless integration into CMS, archives, paywalls, content hubs and knowledge systems via API | Integration dependent on platform features or individual in-house developments |
| External data sources | Agent-based API integration of external data (e.g. databases, events, standards, statistics) for maximum response depth | External data only through plugins/tools or manual integration, usually without orchestrated agent logic |
| User interaction | Dialogue system with feedback loops, ratings and continuous optimisation based on user interactions | Classic chat dialogue without integrated feedback or optimisation module |
| Chat history | Structured, nameable chat histories with retrievability and knowledge storage | Session-based history without structured knowledge organisation for specialist contexts |
| Contextual understanding | Deep domain understanding through semantic search, source validation, and multi-level answer derivation | Context-dependent on prompt & training data, limited domain specialisation |
| Content quality | Reliable answers from curated specialist content with source references and human-in-the-loop processes | Depends on retrieval setup or model training, transparency varies |
| Personalisation | Domain, title, target group and product-specific configuration for specialist publishing offers | Personalisation usually only via prompting or generic system parameters |
| Automation | Automated content weighting and prioritisation according to editorial and strategic guidelines | No integrated editorial control logic |
| Scalability | Optimised for large specialist content inventories, structured data and multi-format output | Scaling depending on platform limits & context windows |
| Analytics & Insights | Detailed usage, topic and question analyses, including performance visualisation or API export | Usage analyses are platform-dependent and rarely evaluable from a technical perspective |
| User feedback | Integrated user feedback for continuous quality improvement and optimisation | Feedback not systematically integrated into knowledge base |
| Design | Adaptive chat widget: Fully customisable to publisher UX/CD & brand-compliant integration with logos, colours, typography, labels & disclaimers | Standard UI or custom development required |
| LLM integration | LLM-agnostic: Use any open-source or proprietary models depending on the use case | Tied to platform or provider models |
| Multilingualism | Technically optimised multilingualism, including SEO localisation and semantic entities | Multilingualism depends on the model, limited SEO fine-tuning |
| Further development | Industry-specific feature roadmap for specialist publishers | Platform roadmap without publishing specialisation |
| Support | Personal support from AI, SEO and specialist publishing experts in the DACH region | Generic online support without specialist knowledge |